A Guiding Framework to Improve Classroom Assessment Practices

Developing Criteria for Selecting High-Quality State and District Assessment Literacy Initiatives

This is the first in a series of CenterLine posts based on the assessment and accountability issues addressed this summer by our 2022 summer interns and their Center mentors. Yelisey Shapovalov from James Madison University worked with Carla Evans to develop criteria for evaluating the quality of state and district classroom assessment literacy initiatives.

My internship at the Center for Assessment involved creating a screening tool to help state, district, or school leaders select a high-quality assessment literacy professional learning program before they launch assessment literacy initiatives.

The need for high-quality K-12 classroom assessment practice has persisted for decades (DeLuca & Johnson, 2017; Popham, 2009; Stiggins, 1991). Ideally, teachers would support student achievement by leveraging assessment literacy: the knowledge and ability to design, select, adapt, interpret, and use educational assessments in the classroom to make better educational decisions that improve student learning. However, the promise of high-quality classroom assessment practice has not been realized, and many in-service teachers need supplemental professional learning.

To address this need, many states, organizations, testing vendors, and educational institutions are creating assessment literacy professional learning modules and other resources for K-12 teachers and leaders. However, it is unclear whether these modules and resources use high-quality content and implementation plans that effectively support the intended goals of state or local education agencies.

Thus, my internship focused on building a screening tool that state, district, or school leaders can use to evaluate the quality of a program's content and implementation plan before launching an assessment literacy effort.

Reviewing the Research on Assessment Literacy and Teacher Professional Development

Two research questions guided the development of the screening tool:

- What foundational knowledge and skills do K-12 educators need to be considered ‘assessment literate,’ according to the research literature and experts in the field?

- What are best practices for implementing in-service K-12 educator professional development, according to reviews of the recent literature?

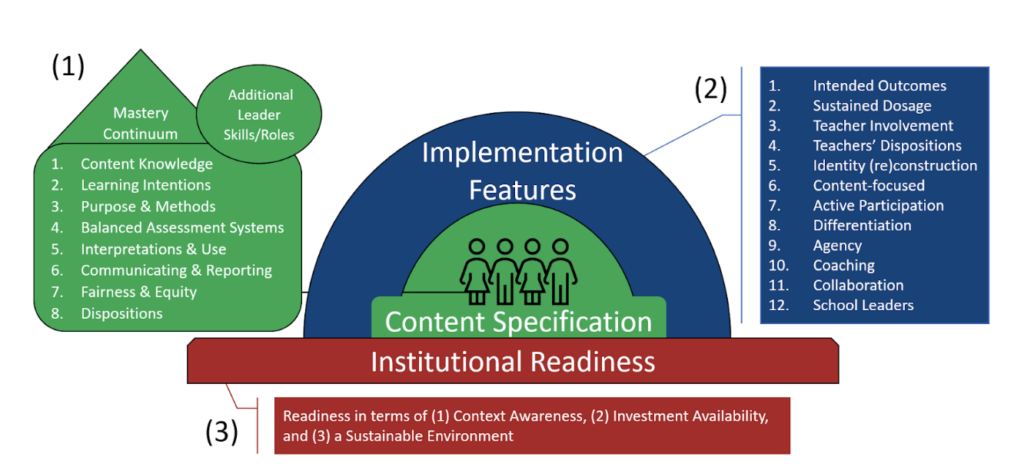

I conducted a systematic literature review to answer the research questions. As shown in Figure 1, I synthesized the results of the review into a guiding framework organized around recurring themes. I then used the guiding framework to inform the development of a screening tool, which I will present in a subsequent blog post.

The Guiding Framework for Developing the Screening Tool

The guiding framework organizes the criteria for evaluating the quality of state and district/school assessment literacy initiatives according to three key elements essential for any K-12 in-service professional learning initiative: (1) the content specifications of a professional learning program, (2) the implementation features of a professional learning program, and (3) the institutional readiness, or school context, within which the professional learning program will be conducted.

Content specification domains describe the intended outcomes of a professional learning program for K-12 teachers and leaders. Together, they comprehensively define the assessment literacy capacities educators need across educational assessments, including classroom assessments and other assessments such as state tests and school- or district-based assessments (see Figure 1 for a list of the domains). These foundational knowledge, skills, and dispositions all fall along a mastery continuum on which educators move from novice to expert over time through practice, reflection, and feedback.

The literature review analysis also suggested that leaders (e.g., school principals, instructional coaches, and district leaders) need additional knowledge and skills because of their roles within the educational system, their critical responsibility for supporting teachers' efforts, and the incoherence that assessment decisions made at the school or district level can create.

Implementation feature domains are the 12 research-based design features that should be present in a professional learning program to help it achieve its outcomes. Those outcomes should be clearly defined and paired with a thorough plan for meeting them (see Figure 1). While educators should be able to adapt outcomes and plans to fit their local context and content, a robust implementation plan with these high-leverage features is necessary to achieve the ambitious goals of a comprehensive assessment literacy initiative.

Institutional readiness domains describe the prerequisite conditions within the school and/or district that must be in place to implement a professional learning program with fidelity and appropriate adaptation (see Figure 1). The first two elements (content specifications and implementation features) align directly with our research questions, but the literature review analysis suggested that the specific context and readiness of the educational institution are also important factors in successfully implementing the professional learning program and producing the intended outcomes related to educator assessment literacy.

More detailed information on the guiding framework will be available in a subsequent blog post, where I will describe the process of creating and pilot-testing the screening tool as well as creating a Research Synthesis report. We hope that the research-based, user-friendly screening tool will help state and district leaders make informed decisions when selecting an assessment literacy professional learning program that will lead to better educational assessment practices and improved student learning.