In Search of the “Just Right” Connection Between Curriculum and Assessment

Considering Options Between Curriculum-Specific and Curriculum-Agnostic State Assessment

State summative assessments are intentionally designed to be curriculum-agnostic. The design of these state assessments ignores the various instructional materials and other curriculum implementation decisions on purpose, instead focusing on the disciplinary standards. The underlying logic of this approach makes sense: each discipline’s standards represent a (relatively) agreed-upon set of end-of-instruction goals for learning, and while there may be many paths (i.e., curricula) to achieving that goal, the point of state assessment is to determine whether the goal was accomplished.

In making these determinations, the state assessments are meant to provide fair comparisons of student achievement so that schools can be identified for support. We know that once a school is identified for support under a state accountability system, those providing support have to do a great deal of work to connect the signals provided by the state assessment to the curriculum within a school. At best, this work is onerous and requires savvy educators and leaders working together across divisions within schools and districts to translate what the results of the state assessment mean for instruction and the curriculum that supports it. At worst, state assessments can actually advance practices that detract from learning.

A solution to instructional irrelevance? Intentionally connect state assessment to curricula that guide what, how, and when students learn in the classroom.

The Curriculum-Specific Assessment Model

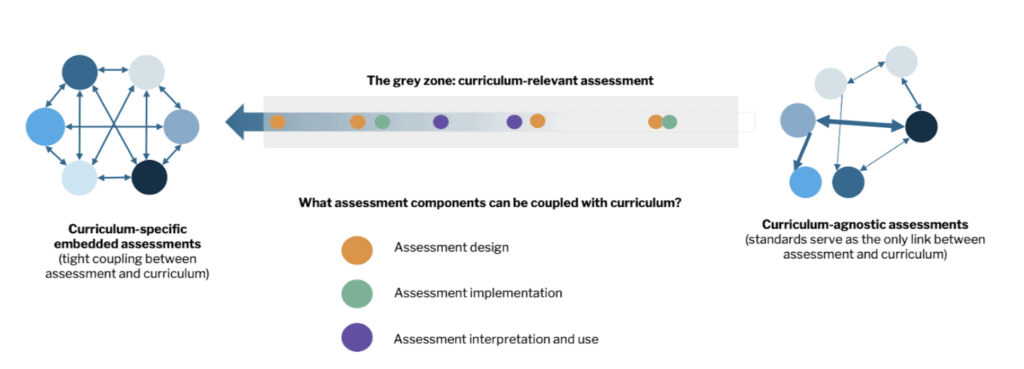

The most obvious relationship between assessment and curriculum is the closest (and likely the least practical) one. If curriculum-agnostic state assessments represent one extreme end of a spectrum, then the polar opposite is a curriculum-specific (and potentially curriculum-embedded) model, in which the state assessment itself is directly tied to activities that are part of specific individual curricula.

Taken to its extreme, this model likely results in as many state assessments, or forms of state assessment, as there are curricula used within a state. Designing such a large set of assessments is a herculean task and likely far beyond the resources of any single state (although a consortium of states could potentially do so).

Curriculum-Relevant Models

In between the extremes of being inextricably tied to a specific curriculum and completely ignorant of any curriculum is another option: a set of “curriculum-relevant” models. Such models encompass a number of approaches, but the core idea is that assessment design, and support for interpretations, make intentional connections to curricula that teachers and students are using. When designed well and used as part of a coherent teaching, learning, and assessment strategy, these curriculum-relevant models can operationalize both the monitoring and signaling promise of state assessment systems.

Leverage Points: How Do We Make Assessments More Relevant to Classroom Curriculum?

There are a wide variety of ways states could consider approaching assessment and curriculum in more integrated ways, and how states approach curriculum relevance will depend on issues of capacity, teaching and learning policies, and the specifics of disciplinary standards implementation within each state.

Instead of an exhaustive list of curriculum-relevant options, it may be helpful to think about curriculum-relevant assessment models in terms of features of an assessment system that can be more tightly or loosely connected to curriculum in ways that meet state goals around the instructional relevance of state assessments (building on Marion’s (2018) idea of tightly or loosely coupled assessment systems).

Features of the assessment design, implementation, and use (discussed below) can all be more tightly or loosely coupled to curriculum. How these features intentionally connect state assessment and curriculum provides a range of ways that assessment systems can be designed for the “grey area” between curriculum-specific and curriculum-agnostic assessments.

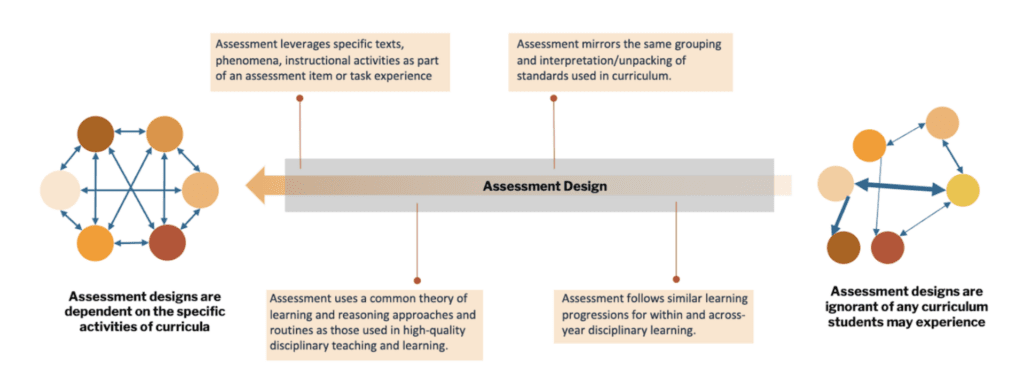

Assessment Design

One way states can approach curriculum relevance for their assessments is through tighter coupling between the content of state assessments and curricula, including both what is assessed and how students make their thinking visible. This offers an opportunity for states seeking to offer instructionally relevant assessments and associated professional learning to attend more closely to what and how students learn, using curriculum as a representation of student learning experiences.

- The ‘What’: Assessed Domain. Connecting the domain or disciplinary content of an assessment to curriculum is often what springs to mind when considering assessments that are more closely coupled with curriculum. In curriculum-relevant systems, this tighter coupling could look like items and tasks that are directly related to the content experienced within one or more curricula (e.g., directly leveraging common texts or phenomena students have experienced or interacted with, or designing for intentional transfer); ensuring consistent interpretations of standards within instruction and assessment (e.g., similar use of standards bundling and unpacking across assessment and instructional materials); and designing assessments to follow similar within- and across-year learning progressions for how students are expected to develop the targeted knowledge and skills.

- The ‘How’: Coherent Assessment and Instruction Experiences. State assessments can be designed to mirror the theories of learning–and associated routines and approaches to developing disciplinary knowledge and practice–underlying high-quality curricula within a given discipline (Shepard et al., 2017). This design methodology often involves moving away from standalone, selected-response items and toward both on-demand and classroom-embedded performance tasks. Increasingly, curriculum-relevant models might also include tasks that engage students in sense-making and reasoning routines similar to those used to develop disciplinary proficiency within instruction, provide familiar structures for students to make their thinking visible, and attend to the ways students have experienced transferring their understanding to new contexts.

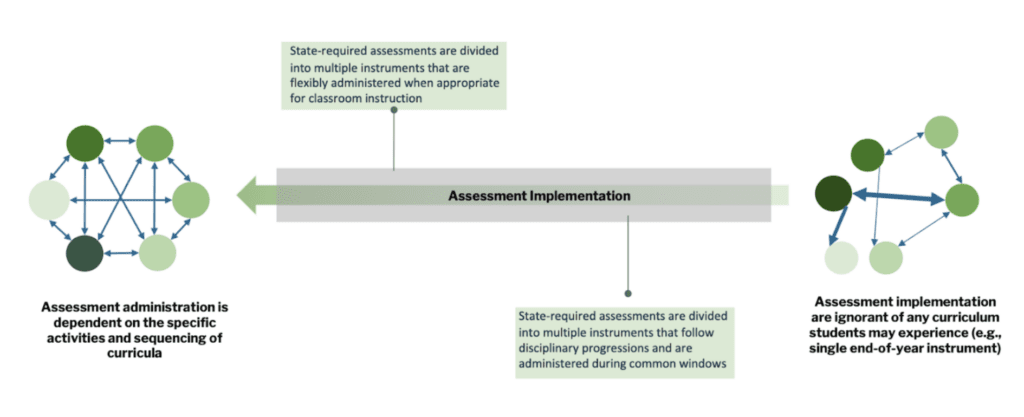

Assessment Implementation: Administration Structure, Timing, and Flexibility

In addition to connecting assessment content and experience to curricula, states can more intentionally consider when students are assessed: in other words, they can structure the assessed domain across multiple assessments and allow for flexibility in administration. One option that might work across curricula is to divide up the standards into very small groups, either individual standards or small groups of standards, then allow teachers to select when to give assessments (e.g., Dadey, August 2018; Dadey, March 2019). However, standards themselves might not be the best way to organize the domain if, for example, students build toward end-of-instruction standards by revisiting components of individual standards several times over the course of a year or grade band. More curriculum-relevant options that take such development over time into account might structure the domain in terms of specific learning progressions, complexity frameworks, and approaches to transfer that reflect how and when learning unfolds.

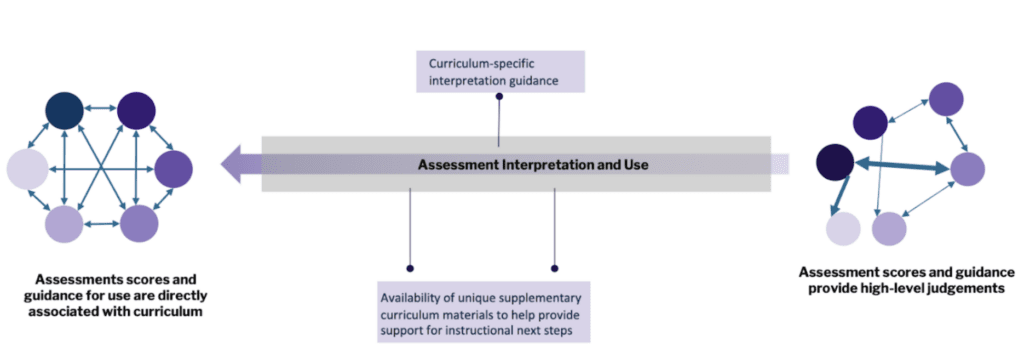

Assessment Interpretation and Use

Common supports for state assessment include interpretive guides and score reports. In curriculum-relevant models, the possible supports could include guides that connect state assessment to curricular materials, score reports that are customized to specific curricula, or even the creation of unique curricular materials that help provide differentiated instruction and/or more personalized curriculum modification and implementation based on assessment results. These supports can be particularly powerful when coupled with a curriculum-based approach to assessment literacy and professional development that helps educators make sense of assessment results in the context of curriculum implementation. Notably, this path toward curriculum relevance could be accomplished to a degree without wholesale assessment redesign–for example, by developing additional curriculum-based guidance for using existing assessment data. This approach could be developed in collaboration with local educators, making it more feasible for states to execute while also enhancing curriculum relevance through professional learning.

Looking Across Features

These connections are not all or nothing: each assessment component can be more tightly or loosely connected to curriculum (e.g., the assessed domain may be connected to curricula, but not so tightly that the assessments are “embedded” as part of the curriculum). Also, the parts of the assessment system called out here are not meant to be exhaustive, but rather valuable and salient examples. States may choose to focus on one or more of these or other features when considering curriculum-relevant assessments, and may decide that some features must be more tightly coupled with curriculum than others to meet their goals. It is also important to recognize that curricula and state assessment are just two nodes to consider within the larger system of state efforts aimed at improving teaching and learning in the state. For these efforts to achieve their potential, states and districts will need to consider how professional learning strategies, resource allocation, and other related policies are designed to work together with new approaches to assessment.

Curriculum Variation and Locus of Control

Moving toward more intentional couplings between curriculum and assessment may position assessments to be far more useful to teachers and students, but such shifts do not come without trade-offs and potential pitfalls that states would need to carefully consider. For example, any state seeking to design assessments with curriculum in mind will have to contend with the use of multiple curricula across the state. The state will need to consider the evolving landscape of high-quality materials, district adoption cycles, and the more informal variations in classroom use and implementation. States considering curriculum-relevant systems will need to design for a flexible and changing curriculum landscape–which will inevitably mean considering who is at the table when making decisions and setting policy for assessment and curriculum alike.

In many states, curriculum and assessment decisions are made by different entities: curriculum decisions are locally made while state assessment decisions live within the state education agency. These differences in decision making are contextualized by the evolving nature of curricular materials and district adoption, and by changing policy priorities at the state level. Approaching more curriculum-relevant state assessment designs necessarily opens the door to more shared ownership of assessment design and curriculum decisions. For this approach to be successful, these kinds of collaborations need to be an honest two-way street, with an emphasis on shared problem solving, a deep respect for teaching and learning, and a desire for assessment to authentically monitor and support student learning and performance. When approached carefully and collaboratively, more coherence between teaching, learning, and assessment can be a win for everyone involved, especially teachers and students.

Aneesha Badrinarayan serves as a Senior Advisor at the Learning Policy Institute, where she supports P-16 assessment system policy and practice. Prior to LPI, Aneesha served as Director of Special Initiatives at Achieve where she led Achieve’s science assessment portfolio in addition to other initiatives focused on more meaningful teaching, learning, and assessment for all learners.