Leveraging All State Education Data at a Crucial Time

A framework to guide states as federal oversight decreases

As the current administration has reduced its role in K-12 schools, it has decreased its support for critical data collection and research. At a time like this, it’s important that states can leverage all the data they have in the most powerful ways so they can maximize their insight into student and school performance. Our high-level framework paper can help them do this.

At the Center, we believe strongly in the value of assessment and accountability systems as an essential component of statewide school quality management, especially when they meaningfully complement the work that is happening locally. Two recent Center briefs make the case for statewide assessment and accountability systems.

The strategic collection, interrogation, and utilization of data at local and state levels play an essential role in the effective management of school quality (our colleague Juan D’Brot discussed this in this blog and this report). When we say “data,” we mean both quantitative information (for example, assessment scores, indicator values, or programmatic outcome data) and qualitative/mixed-media information, such as reports about issues in assessment administration or descriptions of local differences in program implementation.

In our framework paper, we walk through key considerations for leveraging various available data sources so states can understand the nature and possible contextual drivers of student performance statewide. We developed this paper in consultation with representatives from select state education agencies that we work with; their contributions have notably shaped our vision. In this blog, we briefly highlight a few of the main ideas.

Key Steps for Navigating Data Analysis and Interpretation

In our paper, we specifically argue that state education agency teams should prioritize a diligent examination of points of convergence and divergence across data sources and strategically plan for these efforts throughout the year. To operationalize this approach, we outline key recommended steps for navigating the complexities of data analysis and interpretation:

- Adopting a systems-thinking perspective to contextualize student performance

- Identifying strategic priorities for data-driven insight

- Systematically identifying relevant data sources

- Conducting principled exploratory and explanatory analyses

- Clearly differentiating assumptions and hypotheses from data-driven evidence

- Documenting key insights and interpretational caveats for transparency

- Sharing best practices and insights collaboratively across offices

The last point about cross-divisional collaboration is key. Limiting conversations about assessment and accountability results only to the office responsible for these systems misses important opportunities to build a broader set of informed data users across the agency. These other users may have their own supplementary information, and their insights should inform discussions and understanding of results.

They may also interact with school and district staff on topics that could benefit from additional data-driven insights. Such offices typically include offices for school improvement or school support, research and evaluation, special populations, early learning, or career and technical education.

In the paper, we highlight three common use contexts where deeper analysis of student performance patterns and root-cause exploration is valuable:

- Public reporting of state assessment and accountability results

- School and district leader outreach around local school improvement

- Internal strategic planning for statewide systems and supports

Data analyses for these use cases should take the form of systematic, intentional data interrogation. This approach combines exploratory and explanatory methods, moving beyond simple descriptions of the current state to uncover underlying trends and actionable insights, incorporating root-cause analysis whenever feasible.

This cross-agency work is mostly internal and invisible to external stakeholders, but it yields important public products. These include press releases that provide high-level information about the data, detailed dashboard reporting systems, and, in some cases, notification or identification of schools that will receive additional support services and schools receiving special recognition. Planning for this work on an annual basis can help internal teams prepare for conversations with the press, technical advisory committees, or local education agencies.

Four Core Sources of Data

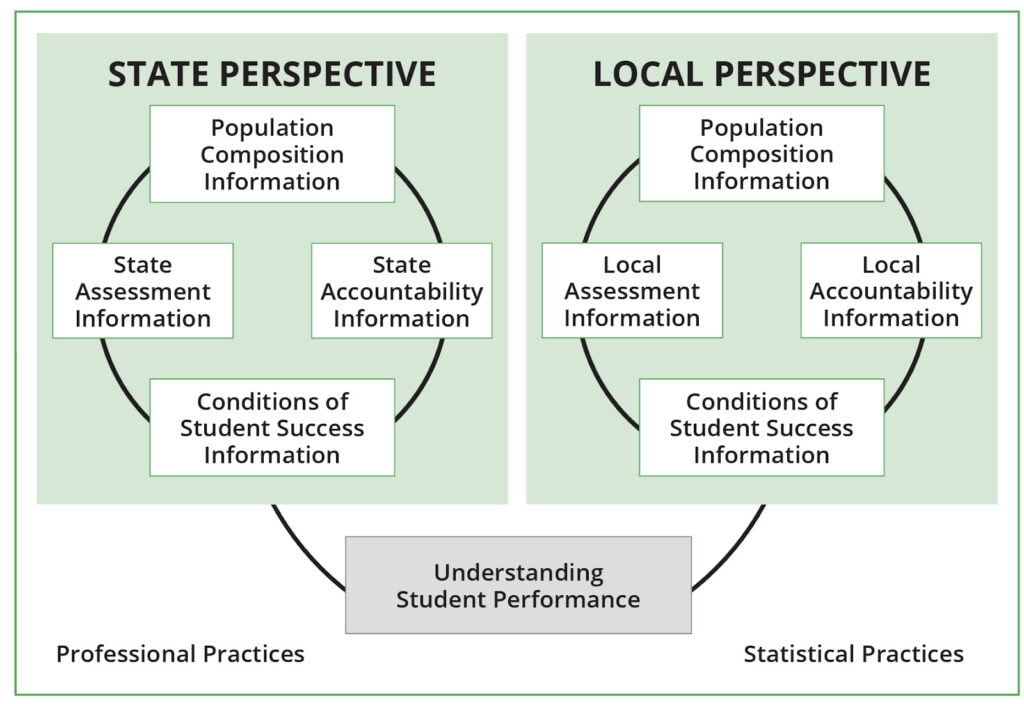

The framework that we present in the paper is anchored in the idea of understanding student performance through principled data interrogation with a variety of relevant data sources. As shown in the accompanying figure, these include four core sources with both state and local components.

We then enumerate a variety of cross-cutting statistical and professional practices that are relevant for all analyses and walk through the specifics of each of these data sources.

For example, when trying to understand the nature and possible drivers of performance trends statewide, within regions, or for particular districts, it is essential to formulate initial hypotheses and then systematically investigate them with data at hand rather than relying on fragmented understandings based on anecdotal evidence. This is essentially good evidence-based reasoning within data science.
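As a rough illustration of investigating a hypothesis with data at hand, the sketch below checks whether two sources agree on the direction of a year-over-year change for each district. Everything in it is assumed for illustration: the district names, the values, and the `compare_sources` helper are hypothetical and do not come from the framework paper.

```python
# Hypothetical year-over-year changes in mean scale score by district.
# Both dictionaries are invented illustration data, not real results.
state_change = {"District 1": -4.0, "District 2": 2.5, "District 3": -3.0, "District 4": 1.0}
local_change = {"District 1": -3.5, "District 2": 3.0, "District 3": 2.0}


def compare_sources(a, b):
    """Label each district by whether two sources agree on the direction of change."""
    labels = {}
    for district in sorted(set(a) | set(b)):
        if district not in a or district not in b:
            # A gap in coverage is itself worth documenting as a caveat.
            labels[district] = "missing in one source"
        elif (a[district] > 0) == (b[district] > 0):
            # Same direction in both sources (a change of zero counts as non-positive).
            labels[district] = "converge"
        else:
            labels[district] = "diverge"
    return labels


flags = compare_sources(state_change, local_change)
for district, label in flags.items():
    print(district, label)
```

Districts labeled as diverging are natural starting points for follow-up conversations, since a disagreement between sources is a prompt for further investigation rather than a conclusion.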

Similarly, it is important to use multiple variables simultaneously in analyses, rather than a single variable at a time, so as to understand effects at the intersection of different characteristics. This is essentially good multivariate data analysis and visualization practice, but it is easy to overlook amid limited resources, competing pressures, and tight timelines within a state education agency.
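A minimal sketch of why intersections matter, using only the Python standard library. The records, the field names (`program`, `attendance`, `score`), and the `group_means` helper are all hypothetical and chosen purely for illustration.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical student records; every field name and value is invented.
records = [
    {"program": "A", "attendance": "high", "score": 80},
    {"program": "A", "attendance": "high", "score": 78},
    {"program": "A", "attendance": "low", "score": 60},
    {"program": "A", "attendance": "low", "score": 62},
    {"program": "B", "attendance": "high", "score": 79},
    {"program": "B", "attendance": "high", "score": 81},
    {"program": "B", "attendance": "low", "score": 40},
    {"program": "B", "attendance": "low", "score": 42},
]


def group_means(rows, keys):
    """Return the mean score for each combination of the given keys."""
    groups = defaultdict(list)
    for row in rows:
        groups[tuple(row[k] for k in keys)].append(row["score"])
    return {group: mean(scores) for group, scores in groups.items()}


# One variable at a time: program B simply looks weaker overall.
by_program = group_means(records, ["program"])

# Two variables together: the gap sits almost entirely among low-attendance students.
by_both = group_means(records, ["program", "attendance"])

print(by_program)
print(by_both)
```

In this made-up data, the single-variable view suggests a program-level difference, while the two-variable view shows that high-attendance students perform similarly in both programs. Real analyses would also need to attend to group sizes and suppression rules before interpreting such cells.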

To bring these ideas together, we present three use cases, one for each of the three contexts mentioned above. For example, one use case describes a situation in which principled data interrogation within an agency, after refuting a few alternative hypotheses through data, uncovered how local curricular choices had affected student engagement and, as a result, academic performance for a particular subgroup. This discovery was only possible through cross-agency conversations and discussions with district staff.

Resources for Additional Insight

Our framework paper focuses specifically on best practices for leveraging data. Other useful resources provide complementary insight. For example, on one end of the spectrum, there are select packages and routines in R, Python, SAS, SPSS, and other software environments for executing the data analysis work.

This work can also be supported with enhanced power and code-writing support through modern AI environments such as Microsoft Azure, Databricks, or Claude, to name but a few. These platforms often provide scalable infrastructure and advanced tools that make traditional statistical computations more robust and efficient.

On the other end of the spectrum, this report walks through a wealth of publicly available resources that provide high-level guidance around data strategy, management, and analysis.

Our framework is situated between specific computational routines and very broad strategic guidance. The core ideas in our framework are designed to be useful for guiding thinking and internal planning in teams across state education agencies or, perhaps even better, to inspire these teams to share their best practices with colleagues within professional communities of practice.

We hope that readers find this framework stimulating and useful for discussing, reviewing, and refining internal practices at a state agency. We will expand this framework through a broader toolkit for agency leadership and provide updates in a future blog. To this end, we welcome any suggestions or resources that you would be willing to share, which we would love to include in the toolkit with proper attribution!