Tongue Tied by Testing Terminology

Collaborating to Fill a Void in Information to Support the Effective Use of Educational Assessment

City Year is an American education nonprofit organization, founded in 1988, dedicated to helping students and schools succeed. The organization partners with public schools in 29 high-need communities across the U.S. and also works through international affiliates. The Center for Assessment has been partnering with City Year for several months to help increase the assessment knowledge and skills of City Year staff. We’ve had the privilege of working on this project with Will Scarbrough, head of Student Analytics at City Year. We’re thrilled to host this guest post from Will about, in his words, “sharing an assessment reference document for mere mortals.” Here, he writes about the challenges of testing terminology.

“Why is SGP [student growth percentile] a better solution to measure growth in this case?” asked our data manager from San Antonio on a video call. As City Year’s head of student analytics, I did not have a clear enough answer. “Some of my partner teachers don’t understand what an SGP is; how can I explain this to them?” We needed a firmer foundation to stand on: clearer definitions of key terms and a better way to explain complicated assessment concepts and results.

The Limitations of Assessments Already in Use for Measuring Student Growth

Student growth in mathematics and in English language arts are two of the key indicators we define and track in every school where we work. However, we are constrained to use the assessments those districts already administer. We also must choose the measure that best fits how City Year AmeriCorps members work with students in each school. Our theory of change is centered on student agency, identity formation, and durable skills, and some of those durable skills are in math and English. Measuring that growth well across 43 school districts requires a clear approach to choosing among the available tools.

In a world where a complicated Excel formula can be discovered in seconds and the definition of any term can be looked up instantly, the space of clear, practical explanations of assessment terms felt strangely empty. Is there a Bermuda Triangle of Google searches for certain complex topics?

Have We Become too Comfortable With Technical Testing Terminology?

You will find long, scrolling web pages of assessment vocabulary, but without context or curation. A search for a particular term, say “normal curve equivalent,” yields reams of deeply technical definitions that go well beyond their use in education. Assessment vendor materials sometimes play fast and loose with marketing language that can muddle your understanding of assessment terms. And books on the subject seem to be written by one professor for another, with little regard for educators.

For testing terminology, edglossary.org is a great place to start, yet it does not include some of the more technical data terms we need to understand. We could not find an approachable and precise reference to guide our choice of measures and support conversations with schools. So, along with the Center for Assessment, we set out to make one.

Our data staff in cities across the country kicked us off by suggesting that a reference document was the right starting point, and they gave us feedback as we developed the materials. Professionals at the Center for Assessment checked everything for accuracy. Here is a selection of our straightforward explanations of common student growth measures:

Student Growth Percentile (SGP) – A measure of a student’s academic growth compared with similar students. Typically, “similar students” are defined as students in the same grade with similar starting scores. A student with an SGP of 30 scored higher than 30 percent of students with similar score histories.

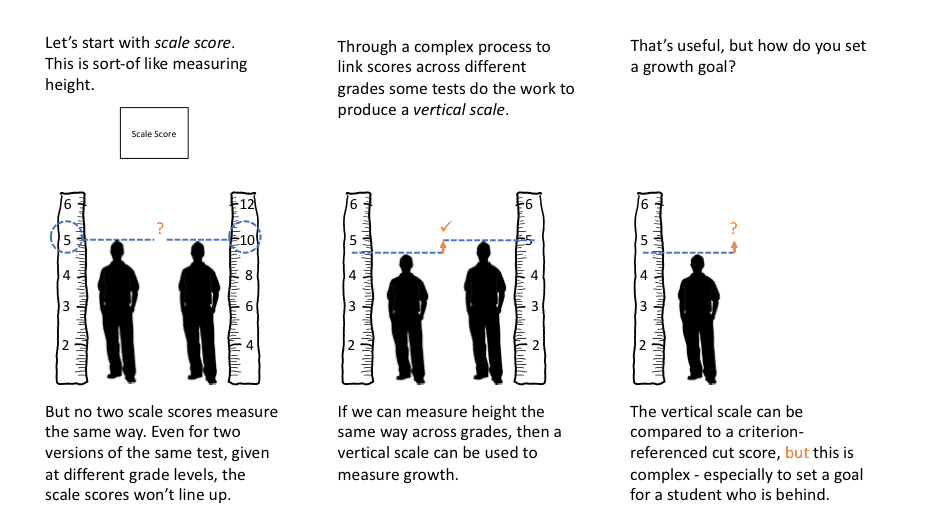
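To make the SGP definition concrete, here is a minimal Python sketch under simplifying assumptions. It groups students into peer bands by a single prior score (the `band_width` of 10 scale-score points is a hypothetical choice) and reports the percent of band peers each student outscored this year, clamped to the 1 to 99 range on which SGPs are usually reported. Operational SGPs are typically estimated with quantile regression over one or more prior scores, so treat the hypothetical `simple_sgp` function as an illustration of the idea, not a substitute for a state or vendor calculation.

```python
from collections import defaultdict


def simple_sgp(students, band_width=10):
    """Approximate SGPs by comparing each student's current score with peers
    whose prior score falls in the same band. This binning approach is a
    hypothetical simplification of how real SGPs are estimated.

    students: list of (student_id, prior_score, current_score) tuples.
    Returns a dict mapping student_id -> SGP on a 1-99 scale.
    """
    # Group students into "academic peer" bands by their prior score.
    bands = defaultdict(list)
    for sid, prior, current in students:
        bands[prior // band_width].append((sid, current))

    sgps = {}
    for peers in bands.values():
        n = len(peers)
        for sid, current in peers:
            # Count peers (excluding the student) who scored lower this year.
            lower = sum(1 for other_id, c in peers if other_id != sid and c < current)
            if n == 1:
                pct = 50  # hypothetical default when a student has no peers
            else:
                pct = round(100 * lower / (n - 1))
            # Clamp to the 1-99 range in which SGPs are commonly reported.
            sgps[sid] = min(99, max(1, pct))
    return sgps


# Hypothetical students whose prior scores all fall between 410 and 419.
students = [
    ("A", 410, 430),
    ("B", 412, 445),
    ("C", 415, 425),
    ("D", 418, 460),
    ("E", 419, 438),
]
print(simple_sgp(students))
# {'A': 25, 'B': 75, 'C': 1, 'D': 99, 'E': 50}
```

Student B outscored three of four peers with similar prior scores, so the sketch assigns B an SGP of 75, matching the reading “scored higher than 75 percent of students with similar score histories.”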

Student Growth Along a Continuous Scale (Vertical Scale) – A way to measure student growth in a content area, within and across grades, using scale scores from successive administrations of progressively more difficult tests. This kind of growth measure is made possible by developing a single test scale that spans all grades. The difficulty of these tests generally increases as students progress through grade-level material, which lets us determine growth simply by comparing a student’s scale scores over time.
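Because every grade shares one scale on a vertically scaled test, growth can be read directly as a difference in scale scores. The sketch below assumes a single student’s scores from one vertically scaled assessment family; the scores and the function name `vertical_scale_growth` are hypothetical. Subtracting scores this way would not be meaningful for tests that report on separate, grade-specific scales.

```python
def vertical_scale_growth(scores_by_grade):
    """Compute grade-to-grade growth from scale scores on one vertical scale.

    scores_by_grade: dict mapping grade -> scale score for one student
    (hypothetical values). Returns a list of (from_grade, to_grade, growth).
    """
    grades = sorted(scores_by_grade)
    growth = []
    for earlier, later in zip(grades, grades[1:]):
        # All grades share one scale, so a simple difference measures growth.
        growth.append((earlier, later, scores_by_grade[later] - scores_by_grade[earlier]))
    return growth


print(vertical_scale_growth({3: 410, 4: 432, 5: 447}))
# [(3, 4, 22), (4, 5, 15)]
```

For these made-up scores, the student grew 22 points from grade 3 to grade 4 and 15 points from grade 4 to grade 5.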

Also, to help visualize how a vertical scale supports the measurement of growth, I developed the following graphic:

I come to this work with analytics and engineering expertise but without deep training in assessments. I enjoyed the opportunity to swim in these waters. I hope I can contribute by enhancing the clarity and confidence of these conversations for you.

The documents we created, Academic Assessment Terminology and Test Scores, are licensed under a Creative Commons Attribution 4.0 International license (CC BY 4.0), so you are free to reuse and repurpose the materials as long as you give credit, keep this license, and note if changes have been made.